Fear not – this feature is coming! In this article we dig into the technical challenges of implementing it. Examining them reveals the fundamental design philosophy of Zeebe, the workflow engine powering Camunda Platform 8.

Update 1/2024: We told you not to fear—and now this feature is here! You can now delete process definitions in Camunda 8. See this page in our documentation for more on how to do it.

One of the most common questions asked about Camunda Platform 8 is “why can’t I delete process definitions?”

If we look at the motivations for process definition deletion, one is the situation where Operate, the process management interface, is filling up with process definitions – perhaps due to testing. Scrolling through a bunch of them is troublesome. Wouldn’t it be easier to get rid of them?

Another motivation is data retention. What happens if a customer requests that all of their data be scrubbed from a Camunda Platform 8 system? Can that be done?

A third motivation is to stop a process definition with a timer start event from spawning new process instances.

Camunda Platform 7 supports deleting process definitions, so users frequently expect the same in Camunda Platform 8 and are confused by its absence (examples from the Forum: 1, 2, 3). It is counter-intuitive.

On the face of it, it seems ridiculous:

How can you not delete process definitions???

Is it a conspiracy by Big BPMN to charge extra for the full set of CRUD operations?

How is such a basic feature missing?

Zeebe and Camunda Platform 8 are based on simple but powerful elements: replicated event stream, stream processing, replicated snapshots of computed state, exported event stream, computed historical state from event stream.

Implementing the deletion of process definitions – while on the surface a simple user story – requires complex behavior across multiple components in the system.

To understand why it’s not a simple operation to implement, we need to understand the radical innovation of Zeebe, the workflow engine that powers Camunda Platform 8.

Zeebe: “No More Relational Database”

With Zeebe, we have done away with the relational database.

You may have heard this previously – it’s an oft-repeated statement that almost becomes a slogan. But what does it mean?

In Camunda Platform 7, the engine uses a relational database to maintain the state of the engine and process instances. Movement of a process instance through the process definition is implemented by mutation of database rows.

Remember that a process is “the directed evolution of state over time”. Here, we deal with two of the most tricky parts of any computer system: state and time.

An obvious way to implement this system is to mutate state in a database.

There are several issues with this.

One is performance. Row locking, transactions, and resource contention make the database a bottleneck for the whole system.

The obvious way to deal with that is to use a web-scale database, like MongoDB.

Yes, I do have my tongue in cheek when I say that, and yes, we did try it – an early investigation used a sharded NoSQL database, Apache Cassandra.

Another issue is that a database is inherently stateful. That makes it a poor match for the reschedulable, ephemeral containers and reproducible infrastructure of Kubernetes.

Eventually, we did away with the database altogether by switching from mutating database rows (state) to stream processing (event sourcing and state reduction).

Stream Processing as a Paradigm

With stream processing, commands are written to an event stream. A stream processor acts on each command in the stream and writes an event acknowledging that the command has been acted on. This allows the state of the system to be computed by reducing the events in the stream.
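To make the idea concrete, here is a minimal sketch of a stream processor and a state reducer. It is not Zeebe's implementation: the `Record` type, the intent names, and the `process` and `apply` functions are all hypothetical, chosen only to show commands being acknowledged by events and state being computed by folding over the stream.

```python
from dataclasses import dataclass
from functools import reduce


@dataclass(frozen=True)
class Record:
    # Hypothetical record shape, for illustration only.
    position: int      # offset of the record in the stream
    kind: str          # "COMMAND" or "EVENT"
    intent: str        # e.g. "CREATE" (command) or "CREATED" (event)
    instance_key: int  # the process instance the record refers to


def process(command: Record, next_position: int) -> Record:
    """Stream processor: act on a command and return the acknowledging event."""
    acknowledged = {"CREATE": "CREATED", "COMPLETE": "COMPLETED"}
    return Record(next_position, "EVENT", acknowledged[command.intent],
                  command.instance_key)


def apply(state: dict, record: Record) -> dict:
    """Reducer: fold one record into the state. Only events change state."""
    if record.kind != "EVENT":
        return state
    new_state = dict(state)
    if record.intent == "CREATED":
        new_state[record.instance_key] = "ACTIVE"
    elif record.intent == "COMPLETED":
        new_state.pop(record.instance_key, None)
    return new_state


# Append commands to the stream, each followed by its acknowledging event.
stream = [Record(1, "COMMAND", "CREATE", 100)]
stream.append(process(stream[0], 2))
stream.append(Record(3, "COMMAND", "CREATE", 101))
stream.append(process(stream[2], 4))
stream.append(Record(5, "COMMAND", "COMPLETE", 100))
stream.append(process(stream[4], 6))

# The current state is never stored as the source of truth; it is computed.
state = reduce(apply, stream, {})
print(state)  # {101: 'ACTIVE'}
```

Nothing in the stream is ever updated in place. The state is a derived value that any node holding the same stream can recompute.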

Why do this? Because it allows a distributed system to be built, one that can scale horizontally more efficiently than one with a centralized database.

Philipp Ossler, one of the core Zeebe engineers, gave a great presentation on it at the recent Camunda Summit: Building a workflow engine based on stream processing.

But how does that lead to deleting a process definition being an issue?

Let’s look.

With Zeebe, brokers are clustered for replication (fault tolerance / high availability) and partitioning (high performance). These are orthogonal concerns. Partitions are distributed across the cluster to allow the resources of the cluster nodes to be brought to bear on the workflow. Partitions are replicated across the cluster to ensure that a single node going down does not cause data loss.
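A small sketch can show how partitioning and replication combine. The round-robin placement below is an assumption made for illustration, and the `distribute` function and its parameters are hypothetical; the point is only that spreading partitions uses every node, while replicating them means no single node holds the only copy.

```python
def distribute(broker_count: int, partition_count: int,
               replication_factor: int) -> dict:
    """Map each partition to the brokers that hold a replica of it.

    Assumption: simple round-robin placement, for illustration only.
    """
    if replication_factor > broker_count:
        raise ValueError("replication factor cannot exceed broker count")
    layout = {}
    for partition in range(1, partition_count + 1):
        first = (partition - 1) % broker_count
        layout[partition] = [(first + i) % broker_count
                             for i in range(replication_factor)]
    return layout


layout = distribute(broker_count=3, partition_count=3, replication_factor=2)
print(layout)  # {1: [0, 1], 2: [1, 2], 3: [2, 0]}

# Simulate broker 1 going down: every partition still has a replica.
survivors = {partition: [b for b in brokers if b != 1]
             for partition, brokers in layout.items()}
print(all(survivors.values()))  # True
```

With a replication factor of 1 the same outage would leave one partition with no replica at all, which is why the two settings are tuned independently.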

Commands for a partition, such as “create process instance,” “activate jobs,” and “enter flow node,” are written to the event stream on a broker. The event stream is replicated across one or more brokers, but only one broker computes the current state for a given partition (while acting as a replica for others). The broker computing the current state also periodically replicates a snapshot of that state. This enables another node, in the event of a fail-over, to compute the current state of the partition by taking the last snapshot and then replaying the event stream from that point.
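The fail-over path can be sketched as follows. The `Snapshot` type, the tuple-shaped events, and the `recover` function are hypothetical names used for illustration. The sketch shows that a snapshot plus the events after it yields the same state as replaying the whole stream.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    position: int  # position of the last event included in the snapshot
    state: dict    # computed state as of that position


def apply(state: dict, event: tuple) -> dict:
    """Fold one (position, intent, key) event into the state, in place."""
    _position, intent, key = event
    if intent == "CREATED":
        state[key] = "ACTIVE"
    elif intent == "COMPLETED":
        state.pop(key, None)
    return state


def recover(snapshot: Snapshot, log: list) -> dict:
    """Rebuild state on a new node: start from the snapshot, replay the rest."""
    state = dict(snapshot.state)
    for event in log:
        if event[0] > snapshot.position:
            apply(state, event)
    return state


log = [(1, "CREATED", 100), (2, "CREATED", 101),
       (3, "COMPLETED", 100), (4, "CREATED", 102)]

# State as the processing broker would have computed it from the full stream.
leader_state = {}
for event in log:
    apply(leader_state, event)

# A snapshot replicated after position 2, then a fail-over to another node.
snapshot = Snapshot(position=2, state={100: "ACTIVE", 101: "ACTIVE"})
follower_state = recover(snapshot, log)

print(follower_state == leader_state)  # True
```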

Now, we said that we did away with the relational database, but we still have to maintain state somewhere. There is a key-value store used to maintain state – RocksDB. But it is completely internal to the broker. It is used for holding the computed state of a partition. It is also inaccessible from outside the broker. It is a black box.

When an event has been consumed and included in a snapshot, it is removed from the event stream. When a process instance has completed, all data related to its state is removed from the internal state store.
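A short sketch shows what this clean-up implies. The `compact` function and the tuple-shaped events are hypothetical; the example only shows that once an instance has completed and the stream has been compacted, the broker holds no trace of that instance.

```python
def compact(log: list, snapshot_position: int) -> list:
    """Drop every event already covered by the latest snapshot."""
    return [event for event in log if event[0] > snapshot_position]


log = [(1, "CREATED", 100), (2, "COMPLETED", 100), (3, "CREATED", 101)]

# Compute the state; completing an instance removes it from the state store.
state = {}
for _position, intent, key in log:
    if intent == "CREATED":
        state[key] = "ACTIVE"
    elif intent == "COMPLETED":
        del state[key]

# A snapshot taken at position 3 allows the whole log so far to be dropped.
compacted = compact(log, snapshot_position=3)

# Everything the broker still knows about: state plus remaining events.
known_keys = set(state) | {key for _position, _intent, key in compacted}
print(100 in known_keys)  # False: the completed instance is gone
print(101 in known_keys)  # True: the running instance is still tracked
```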

How we query the system state in Camunda Platform 8

Now, if the internal state of a broker is inaccessible, how is it possible to inspect running processes?

And if all state data related to a process instance is deleted when the process completes, then where is any historical data about the system state stored – such as completed processes?

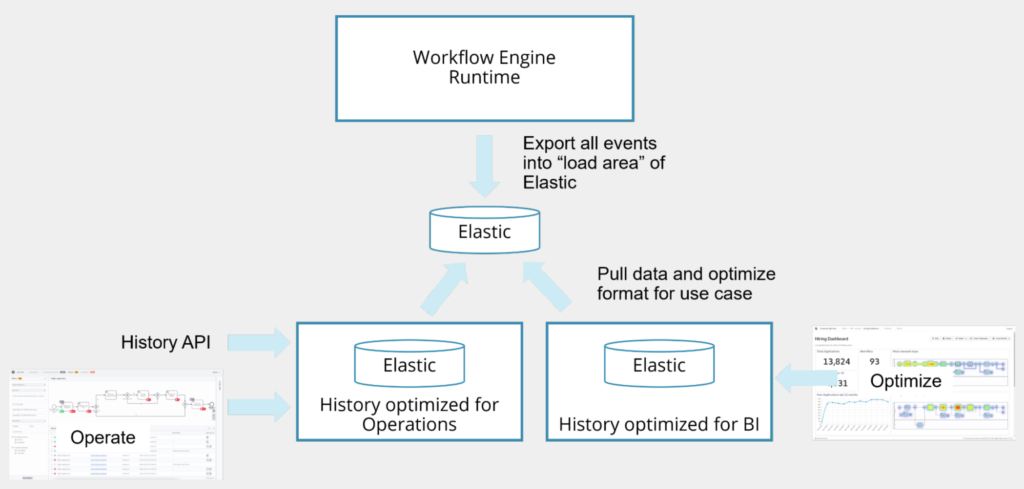

The answer to both of these questions is that the event stream is exported. Exporters can be loaded into a broker node at startup, and they are notified of each event. In Camunda Platform 8, an Elasticsearch exporter is used to export events to Elasticsearch.

Operate is the process instance management tool in the Camunda Platform 8 suite of components. Operate reads the events exported from the brokers in the cluster, and processes the exported event stream to compute the state of process instances. This data is then stored in Operate’s own indices in Elasticsearch. Note that all process instance data – for both running and completed processes – is historical data from the perspective of Operate.

Optimize is the Business Intelligence component in Camunda Platform 8, and it uses the same approach: it builds its own computed state, optimized for reporting, from the historical data exported to Elasticsearch.
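The export-then-compute pattern can be sketched like this. `ListExporter` and `build_view` are hypothetical stand-ins: the list plays the role of the exported records in Elasticsearch, and `build_view` plays the role of the processing Operate performs. Neither reflects the real exporter API or Operate's index layout.

```python
class ListExporter:
    """Hypothetical exporter: copies every record it is handed to a list."""

    def __init__(self):
        self.exported = []  # stand-in for the exported records

    def export(self, record: dict) -> None:
        self.exported.append(dict(record))


def build_view(exported: list) -> dict:
    """Compute a historical view of process instances from exported records."""
    view = {}
    for record in exported:
        key = record["key"]
        if record["intent"] == "CREATED":
            view[key] = {"process": record["process"], "state": "ACTIVE"}
        elif record["intent"] == "COMPLETED":
            # Unlike the broker, the view keeps completed instances.
            view[key]["state"] = "COMPLETED"
    return view


exporter = ListExporter()
for record in [
    {"key": 100, "intent": "CREATED", "process": "order"},
    {"key": 101, "intent": "CREATED", "process": "order"},
    {"key": 100, "intent": "COMPLETED", "process": "order"},
]:
    exporter.export(record)

view = build_view(exporter.exported)
print(view[100]["state"])  # COMPLETED
print(view[101]["state"])  # ACTIVE
```

The view is a second, independent computation over the same events. The broker forgets instance 100 on completion, but the view remembers it.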

So, why can’t I delete process definitions?

Now, let us return to the original question: “why can’t I delete process definitions?”

Let us continue our examination by asking a further question:

What do we actually mean when we say “delete a process definition”?

There are two aspects to it:

- How the system behaves when a client attempts to start an instance of the process definition.

- How historical views are presented to the user.

Let’s say that the system should throw an exception if the client attempts to start an instance of a process definition that has been deleted. It functions as if the process definition does not exist – because it doesn’t.

That seems pretty straightforward.

But, here are some of the considerations that need to be decided when implementing this feature:

- What about the historical data? What should deleting a process definition do about that? Unless we delete all historical instances of that process, we are not going to clean up Operate’s UI – particularly the drop-down to select a process definition.

- Should we filter the drop-down based on whether a process definition has been “deleted”?

- Does deleting a process definition delete all historical data related to it in Operate? Remember that we are looking at two different systems now. One is the running system in the broker cluster, and the other is the “computed view of history” in Operate.

- What would happen when we delete a process definition while there are running instances of that process? How would the display of those be handled in Operate?

- What does it mean to “delete a process definition” in a distributed system, where instances of that process may be running?

- What does it mean to “delete a process definition” in a historical view of the system state (which is what Operate is)?

These are complex questions.
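A toy model makes the split between the two systems visible. Everything here is hypothetical: the `Broker` class, the `DELETED` event, and the `dropdown` function are illustrative assumptions, not a description of how deletion is or will be implemented.

```python
class DefinitionNotFound(Exception):
    """Raised when a client starts an instance of an unknown definition."""


class Broker:
    """Toy broker: holds current definitions and an exported event stream."""

    def __init__(self):
        self.definitions = set()
        self.exported = []  # stand-in for the exported event stream

    def deploy(self, process_id: str) -> None:
        self.definitions.add(process_id)
        self.exported.append(("DEPLOYED", process_id))

    def delete(self, process_id: str) -> None:
        self.definitions.discard(process_id)
        # Hypothetical event: history can only react to what is exported.
        self.exported.append(("DELETED", process_id))

    def create_instance(self, process_id: str) -> None:
        if process_id not in self.definitions:
            raise DefinitionNotFound(process_id)
        self.exported.append(("INSTANCE_CREATED", process_id))


def dropdown(exported: list, hide_deleted: bool) -> list:
    """Historical view: which definitions does the UI offer for selection?"""
    seen, deleted = [], set()
    for intent, process_id in exported:
        if intent == "DEPLOYED" and process_id not in seen:
            seen.append(process_id)
        elif intent == "DELETED":
            deleted.add(process_id)
    return [p for p in seen if not (hide_deleted and p in deleted)]


broker = Broker()
broker.deploy("order")
broker.create_instance("order")
broker.delete("order")

# Runtime behavior: the broker rejects new instances.
try:
    broker.create_instance("order")
    rejected = False
except DefinitionNotFound:
    rejected = True
print(rejected)  # True

# Historical behavior: a separate decision that the view has to make.
print(dropdown(broker.exported, hide_deleted=False))  # ['order']
print(dropdown(broker.exported, hide_deleted=True))   # []
```

Even in this toy, the runtime answer and the historical answer are decided in different places. The historical view can only respond to deletion if deletion is itself part of the exported stream.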

Zeebe and Camunda Platform 8 are based on simple but powerful elements: replicated event stream, stream processing, replicated snapshots of computed state, exported event stream, computed historical state from event stream.

Adding behaviors that cross the boundaries of these elements introduces complexity into the system, and when we do that, we want to make sure that it is justified complexity.

Future plans and current workarounds

First of all – we intend to implement process definition deletion. And it is not available right now.

There is a way to “delete process instances” in Zeebe / Operate that makes sense for customer use cases. One thing is clear: no obvious behavior emerges from the system itself, which is based on immutability. So the behavior needs to be driven by customer use cases. We are looking at how to implement it. It will be complex, and we don’t want to go down a solution pathway without a strong sense that it is the most broadly useful one.

If you want to view the discussion about this feature request or add your use case to it, check out GitHub Issue #2908 in the Zeebe repository.

Given that deleting process definitions is not possible right now, you might consider that the problem you want to solve with process definition deletion needs to be addressed at another level.

Data retention can be handled at the granularity of a cluster. You blow away an entire cluster, along with its Operate instance, to scrub a customer’s data, and you don’t host multiple tenants in the same cluster.

Too many process definitions cluttering up the Operate UI (especially from testing)? Maybe you run the tests on another cluster, or use an ephemeral cluster for testing and POCs.

Want to throw an exception when an instance of a deprecated process definition is started? At the moment, the workaround is to deploy a version of the process definition that raises an incident immediately when it is started. This is not ideal, but it’s the best thing right now.

For timer start events, similarly, the current workaround is to deploy a version of the process without the timer start event. This will override the previous version and prevent the spawning of new process instances.

Summary

We looked at the radically innovative architecture of Zeebe. We’ve reimagined the BPMN engine as a distributed stream processor providing resiliency and scalability. Certain operations become more complex due to the distributed nature of the system, and deleting process definitions is one of them. It’s not a conspiracy, it’s not an oversight, it’s implementing mutation on top of a system that is based on an immutable event stream – so it’s complex.

We plan to implement process definition deletion and we are working toward a solution that is an impedance match for the system architecture and also meets user needs.

In the meantime, there are several approaches you can take to work around the lack of process definition deletion, and a way to provide input to the eventual feature design.