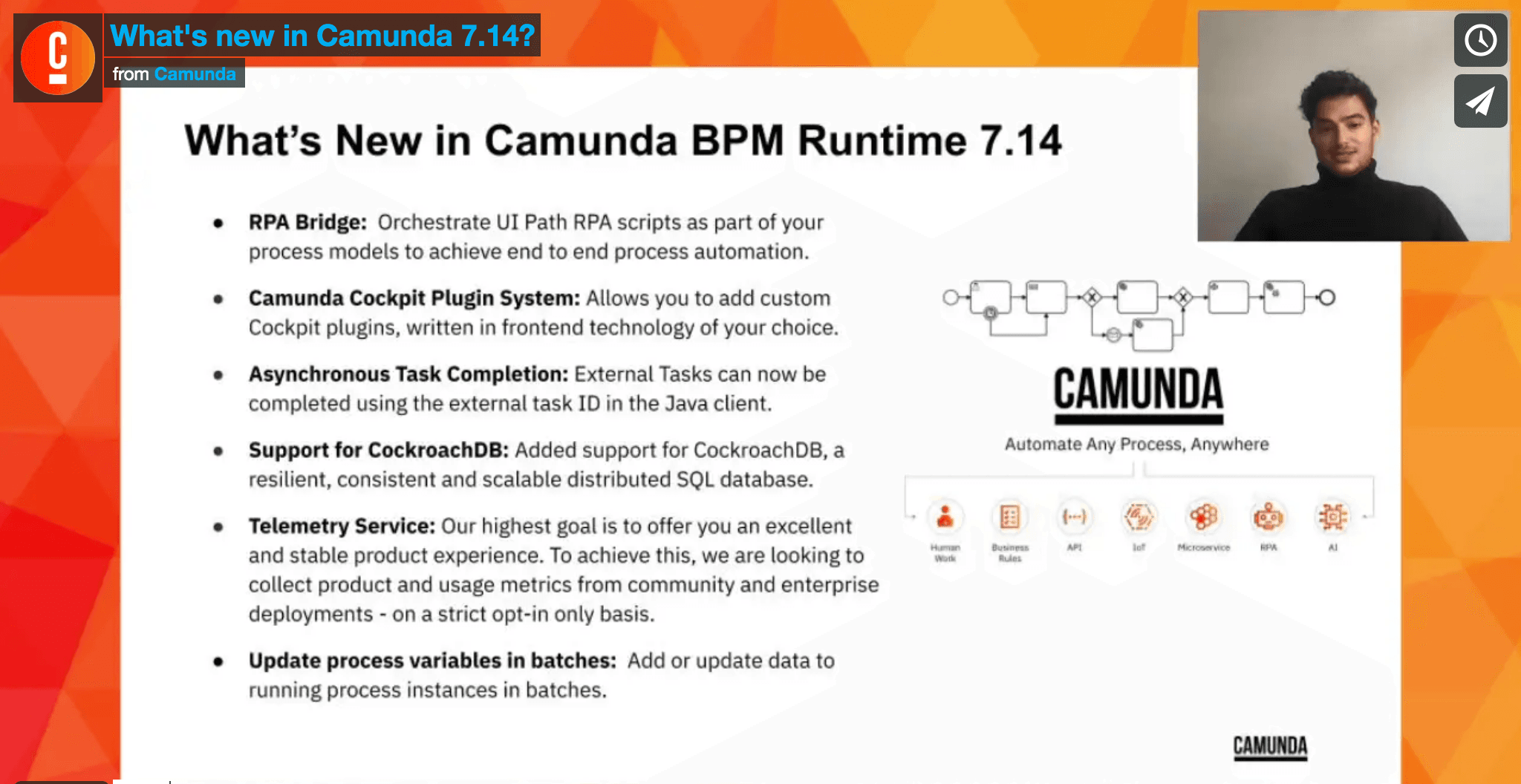

We are excited to announce that Camunda BPM Platform 7.14.0 is now available, providing you with new and powerful features.

Here are some of the highlights:

- RPA Orchestration with the RPA Bridge

- A Reworked Cockpit Plugin System

- Added Support for CockroachDB

- New Batch Operation: Variable Updates

- Extension Properties in External Tasks

- History Cleanup Job Logs Cleanup

- Added Open API Endpoints

- Updated Supported Environments

- Telemetry

You can download Camunda 7.14.0 or run it with Docker.

Also included in the release:

- Assert 8.0.0 for convenient testing of processes in Java

- Java External Task Client 1.4.0, which can be embedded in Java applications

You can read all about these releases in the dedicated blog post.

For a complete list of the changes, please check out the release notes and the list of known issues. For patched security vulnerabilities, see our security notices.

If you want to dig deeper, you can find the source code on GitHub.

RPA Orchestration with the RPA Bridge

We are proud to announce our very first out-of-the-box solution for RPA bot orchestration. With the RPA Bridge, you can easily connect RPA systems to Camunda’s runtime engine and let them communicate with it.

In this first release, Camunda provides support for UiPath. Camunda will look to add formal support for other notable RPA vendors in future releases.

So what is it? The Spring Boot-based application serves as a connector between the Camunda BPM Runtime and the RPA vendor – the UiPath Orchestrator in this first increment. It uses the External Task pattern to enable orchestration of RPA bots and reports status updates and variables of the RPA bots back to the engine.

In a nutshell, what you will need for this to work:

- A UiPath Orchestrator instance, either

  - On-Premises v2019 or v2020.4 or

  - Automation Cloud

- Camunda BPM 7.14+

- A Camunda Enterprise License Key

- Java 8 or later installed on the machine that runs the RPA bridge

- A BPMN model with connection information to your RPA bot

Adding an RPA task to your BPMN model that can be consumed by the bridge is as easy as adding an External Task, containing one specific extension property named bot:

    <bpmn:serviceTask id="myRPAtask" name="Launch the Robots" camunda:type="external" camunda:topic="RPA">
      <bpmn:extensionElements>
        <camunda:properties>
          <camunda:property name="bot" value="UiPathProcessName" />
        </camunda:properties>
      </bpmn:extensionElements>
    </bpmn:serviceTask>

The bridge will pick up the external task, start an RPA bot in UiPath with the process UiPathProcessName and report back to the engine when the started bot is completed or failed for any reason.

With that, you can orchestrate all your RPA bots in an end-to-end process and gain greater overall visibility into your RPA workflows.

For detailed instructions on how to configure your bridge instance and further features like variable handling, head over to our official guide.

A Reworked Cockpit Plugin System

We are happy to announce an all-new frontend plugin system for Cockpit. The new plugin system allows you to extend Cockpit with domain-specific information, written in the web technologies you are most familiar with, be it React, Angular, or just plain JavaScript.

The new plugin API allows for an easy, language-agnostic integration into Cockpit. Check out this “Hello World” plugin:

    // plugin.js
    export default {
      id: "myDemoPlugin",
      pluginPoint: "cockpit.dashboard",
      priority: 10,
      render: (node, { api }) => {
        node.innerHTML = `Hello World!`;
      }
    };

The heart of this plugin is render: (node, { api }); this is where we can extend Cockpit. The render function receives two arguments: a DOM node in which we can render our content, and an object with additional information. What is passed as additional information depends on the plugin point. On the Dashboard, we only receive the api object, which contains REST endpoints and the CSRF token to make REST requests.

To use it, save the file in your Cockpit scripts folder. On Tomcat, this would be server/apache-tomcat-{version}/webapps/camunda/app/cockpit/scripts. The plugin is then registered in config.js by adding it to the customScripts field:

    // config.js
    export default {
      customScripts: [
        'scripts/plugin.js'
      ]
    }

Opening your browser and logging in should result in something like the picture below.

That is the new plugin system at first glance!

You can learn all about it in the dedicated blog post where we walk you through the development of a plugin that uses React.
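The api object passed to render can also be used to call the REST API from inside a plugin. The following is a minimal sketch rather than an official example. It assumes that the object exposes the engine REST base URL as api.engineApi and the token as api.CSRFToken, that the token is sent in an X-XSRF-TOKEN header, and that /process-definition/count returns an object with a count field; please verify these against the plugin documentation. The helper buildCountRequest and the plugin id are hypothetical names.

```javascript
// Hypothetical helper: builds the URL and fetch options for a REST call.
// ASSUMPTION: `api.engineApi` is the engine REST base URL and
// `api.CSRFToken` holds the CSRF token; check the plugin documentation.
function buildCountRequest(api) {
  return {
    url: api.engineApi + "/process-definition/count",
    options: {
      headers: {
        Accept: "application/json",
        "X-XSRF-TOKEN": api.CSRFToken
      }
    }
  };
}

// In plugin.js, this object would be the default export.
const processCountPlugin = {
  id: "myProcessCountPlugin", // hypothetical id
  pluginPoint: "cockpit.dashboard",
  priority: 9,
  render: (node, { api }) => {
    const { url, options } = buildCountRequest(api);
    // Fetch the count and write it into the DOM node provided by Cockpit.
    return fetch(url, options)
      .then((response) => response.json())
      .then((result) => {
        node.textContent = "Deployed process definitions: " + result.count;
      });
  }
};
```

Keeping the request construction in a separate function makes it possible to check the URL and headers without a running Cockpit.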

Added Support for CockroachDB

We are shipping a first increment of CockroachDB support with this new version.

CockroachDB is a highly scalable SQL database that operates as a distributed system. It is built to automatically replicate, rebalance, and recover with minimal configuration using strongly-consistent replication as well as automated repair after failures. The database replicates your data for availability and guarantees consistency between replicas using the Raft consensus algorithm.

Due to different requirements and behavior compared to all other Camunda-supported databases, we have adjusted the process engine behavior and added some additional mechanisms to ensure that the process engine is able to operate correctly on it. We detailed all changes in behavior and new mechanisms in our configuration guide.

The key takeaways are:

- CockroachDB implements the SERIALIZABLE transaction isolation level, which is stricter than the READ COMMITTED level that the process engine usually operates on. Therefore, concurrency conflicts always abort transactions. We treat those by throwing OptimisticLockingException so that the handling is the same as with concurrency conflicts of other databases. However, they may occur a bit more often.

- For some APIs, we have built a CockroachDB-specific mechanism for transparent retries that can be customized via engine configuration.

Please consult the guide before migrating your process applications to CockroachDB, making sure that the known differences in behavior are well-covered by your setup and business logic.
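Since such conflicts may occur more often on CockroachDB, client code that calls the engine may want to retry an operation that failed with an OptimisticLockingException. The sketch below shows one possible client-side approach; it is not the engine's built-in retry mechanism. The helper name retryOnOptimisticLocking, the maxRetries parameter, and the convention that a failed call throws an error whose type property is "OptimisticLockingException" are assumptions made for illustration.

```javascript
// Hypothetical client-side helper, not part of Camunda.
// ASSUMPTION: a failed call throws an error object whose `type`
// property names the engine exception.
function retryOnOptimisticLocking(operation, maxRetries = 3) {
  let attempt = 0;
  for (;;) {
    try {
      return operation();
    } catch (error) {
      const isConflict =
        Boolean(error) && error.type === "OptimisticLockingException";
      if (!isConflict || attempt >= maxRetries) {
        throw error; // unrelated failure, or retries exhausted
      }
      attempt += 1; // concurrency conflict: run the operation again
    }
  }
}
```

A retry like this is only safe when the operation can be repeated as a whole without side effects outside the aborted transaction.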

New Batch Operation: Variable Updates

Especially when automating processes at scale, it sometimes becomes necessary to add or update data of already running process instances.

For example, when a user enters incorrect data at the beginning of a process, the data needs to be corrected on the fly. In Cockpit, this is already possible by setting or updating a variable during process runtime. While this feature is helpful, it becomes a repetitive task when the same variable needs to be set on many process instances at once.

With this release, we introduce a new feature to set variables in the root scope of process instances asynchronously. The new batch operation allows you to set one or more variables on a group of process instances that can be filtered according to several criteria.

If you want to dig deeper into this new batch operation, consult the Java API and REST API documentation.
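As a rough illustration of how the batch operation might be triggered over REST, the sketch below prepares such a request. It assumes an endpoint POST /process-instance/variables-async that accepts processInstanceIds and variables in its body; please verify both against the REST API documentation. The helper name buildSetVariablesBatchRequest, the base URL, the instance ids, and the variable are placeholders.

```javascript
// Hypothetical helper that prepares a request for the new batch operation.
// ASSUMPTION: POST {baseUrl}/process-instance/variables-async accepting
// `processInstanceIds` and `variables`; verify against the REST API docs.
function buildSetVariablesBatchRequest(baseUrl, processInstanceIds, variables) {
  if (processInstanceIds.length === 0) {
    throw new Error("At least one process instance id is required");
  }
  const wrappedVariables = {};
  for (const [name, value] of Object.entries(variables)) {
    wrappedVariables[name] = { value: value }; // explicit type omitted for brevity
  }
  return {
    url: baseUrl + "/process-instance/variables-async",
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({
        processInstanceIds: processInstanceIds,
        variables: wrappedVariables
      })
    }
  };
}

// Usage with placeholder values:
const batchRequest = buildSetVariablesBatchRequest(
  "http://localhost:8080/engine-rest",
  ["instance-1", "instance-2"],
  { customerEmail: "jane@example.com" }
);
// fetch(batchRequest.url, batchRequest.options) would submit the batch.
```

Because the operation runs asynchronously, the response would describe the created batch rather than the updated instances.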

Extension Properties in External Tasks

In the BPMN XML of a process definition, a service task can be declared to be performed by an external worker by setting the attribute camunda:type to external and defining a topic with camunda:topic. Passing on information to the external task worker can mainly be achieved by using process variables. Those variables are sent to the worker in the fetch and lock response.

However, having all worker-related data visible as variables is not desirable in all situations, e.g. when technical worker parameters are not as relevant to the business context.

With this new version, the engine adds another possibility of sending data to the worker with the help of extension properties:

    <bpmn:serviceTask id="orderPizza" name="Order Pizza" camunda:type="external" camunda:topic="createOrder">
      <bpmn:extensionElements>
        <camunda:inputOutput>
          <camunda:inputParameter name="type">${pizzaType}</camunda:inputParameter>
          <camunda:outputParameter name="orderId">${createdOrderId}</camunda:outputParameter>
        </camunda:inputOutput>
        <camunda:properties>
          <camunda:property name="defaultVendorId" value="1034" />
          <camunda:property name="cancelExistingOrder" value="true" />
        </camunda:properties>
      </bpmn:extensionElements>
    </bpmn:serviceTask>

In case includeExtensionProperties is set to true in the request, all defined extension properties will be sent in the fetch and lock response, clearly separating technical parameters from business data:

    [
      {
        "activityId": "orderPizza",
        ...
        "topicName": "createOrder",
        "variables": {
          "type": {
            "type": "String",
            "value": "Margherita",
            "valueInfo": {}
          }
        },
        "extensionProperties": {
          "defaultVendorId": "1034",
          "cancelExistingOrder": "true"
        }
      }
    ]

This aids in dividing such information into two different categories, allows for more fine-grained control over data visibility, and helps separate rather technical concerns from business data.
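To make the request side concrete, here is a hedged sketch of a fetch and lock request body and of a worker-side helper that keeps the two categories apart. It assumes that includeExtensionProperties is set per topic in the body of POST /external-task/fetchAndLock; please check the REST API documentation. The helper names, the worker id, and the lock duration are hypothetical.

```javascript
// ASSUMPTION: the flag is set per topic in the body of
// POST /external-task/fetchAndLock; verify against the REST API docs.
function buildFetchAndLockBody(workerId, topicName) {
  return {
    workerId: workerId,
    maxTasks: 1,
    topics: [
      {
        topicName: topicName,
        lockDuration: 10000, // milliseconds
        includeExtensionProperties: true
      }
    ]
  };
}

// Hypothetical helper: separates business data from technical parameters
// for one task of the fetch and lock response.
function splitTaskData(task) {
  const businessData = {};
  for (const [name, variable] of Object.entries(task.variables || {})) {
    businessData[name] = variable.value;
  }
  return {
    businessData: businessData,
    technicalParameters: { ...(task.extensionProperties || {}) }
  };
}

// Applied to a task shaped like the response shown above:
const exampleTask = {
  activityId: "orderPizza",
  topicName: "createOrder",
  variables: { type: { type: "String", value: "Margherita", valueInfo: {} } },
  extensionProperties: { defaultVendorId: "1034", cancelExistingOrder: "true" }
};
const split = splitTaskData(exampleTask);
// split.businessData holds the pizza type,
// split.technicalParameters holds the two extension properties.
```

Note that extension property values arrive as strings, so a worker would have to convert values such as "true" or "1034" itself.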

History Cleanup Job Logs Cleanup

Cleaning up historic process data is an essential task in the administrative housekeeping of engine installations. The periodic cleanup job will take care of expired data and remove it. However, since this history cleanup itself is a job, it will produce historic data in the form of job log entries as well. Until now, it was not possible to define a history time-to-live for such entries in the historic job log table.

With the introduction of the historyCleanupJobLogTimeToLive property, administrators gain more control over their historic data. The property takes an ISO-8601 formatted duration String specifying a number of days (P30D and P1D are allowed, while PT1H or P5DT10H are not).

Please note that this feature only works in conjunction with removal-time based history cleanup. More information about the new property and history cleanup configuration in general can be found in the documentation.

Added Open API Endpoints

We added Open API descriptions for the following REST API endpoints:

- Historic Process Instance

- Historic Activity Instance

- User

- Incident

To learn more about our Open API, visit our official documentation.

Updated Supported Environments

This new version also introduces more officially supported environments, namely:

- WildFly 20

- JBoss EAP 7.3

- SQL Server 2019

You can have a look at all currently supported environments in our official documentation.

Regarding the Spring ecosystem, we also made some notable changes:

- Spring Boot 2.3 support in the Camunda Spring Boot Starter

- End of Spring 3 support in the engine; have a look at our migration guide for further information

Camunda Needs You

Our product innovation is driven by you – our community – and we want to continue enhancing your experience by bringing you even better products and features. That’s why we’re introducing voluntary telemetry on a strict opt-in only basis. By opting into telemetry, you help us understand the typical environment setups and product usage patterns so we can make informed product improvement decisions for your benefit.

What kind of data does the telemetry service cover?

- Meta data – Product version, edition, database technology, JDK vendor and version, application server vendor and version, license key information if provided (customer name, expiry date, enabled features), and pseudonymized installation ID.

- Usage Statistics – Counts of performed API commands, counts of execution metrics (root process instances, started activity instances, executed decision instances, executed decision elements, unique task workers), and Camunda integration type (e.g., whether Spring Boot Starter, Camunda BPM Run, WildFly integration or EJB integration are used).

Please note that the telemetry service does not collect any personally identifiable or process-related information.

An example of the data we collect looks roughly like this:

    {
      "installation": "8343cc7a-8ad1-42d4-97d2-43452c0bdfa3",
      "product": {
        "name": "Camunda BPM Runtime",
        "version": "7.14.0",
        "edition": "community",
        "internals": {
          "database": {
            "vendor": "h2",
            "version": "1.4.190 (2015-10-11)"
          },
          "application-server": {
            "vendor": "WildFly",
            "version": "WildFly Full 19.0.0.Final (WildFly Core 11.0.0.Final) - 2.0.30.Final"
          },
          "commands": {
            "StartProcessInstanceCmd": {"count": 40},
            "FetchExternalTasksCmd": {"count": 100}
          },
          "metrics": {
            "root-process-instances": { "count": 936 },
            "flow-node-instances": { "count": 6125 },
            "executed-decision-instances": { "count": 732 },
            "unique-task-workers": { "count": 50 }
          }
        }
      }
    }

We are encouraging all users to allow Camunda BPM Runtime to send usage statistics. The information will be used to provide you with a stable and improved product experience in the technical environments you are using. By opting in, you will allow Camunda to collect information about the product version, technical environment, and which features you are using. As you can see above, the information is technical and aggregated.

In case this raises any concerns, we encourage you to consult our telemetry guide, where we provide further technical details on how this process works and how it can be customized to your specific needs.

And in case you don’t feel like sending such data to Camunda yet, we’ve got you covered: telemetry is on a strict opt-in basis, and no data will be sent without your consent.
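For readers who want to switch telemetry on or off programmatically, the following sketch prepares such a request. It assumes a REST endpoint POST /telemetry/configuration that takes an enableTelemetry flag; treat both names as assumptions and confirm them in the telemetry guide. The helper name and the base URL are placeholders.

```javascript
// ASSUMPTION: POST {baseUrl}/telemetry/configuration with an
// `enableTelemetry` flag; verify against the telemetry guide.
function buildTelemetryConfigRequest(baseUrl, enabled) {
  return {
    url: baseUrl + "/telemetry/configuration",
    options: {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ enableTelemetry: Boolean(enabled) })
    }
  };
}

// Usage with a placeholder base URL:
const optInRequest = buildTelemetryConfigRequest(
  "http://localhost:8080/engine-rest",
  true
);
// fetch(optInRequest.url, optInRequest.options) would apply the setting.
```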

There’s Still More

There are many smaller features and bug fixes in the release that are not included in this blog post, but the full release notes provide the details.

Register for the Webinar

If you’re not already registered, be sure to secure a spot at the release webinar. You can register for free here.

Your Feedback Matters!

With every release, we strive to improve Camunda BPM, and we rely on your feedback! Feel free to share your ideas and suggestions with us.

You can contact us via the Camunda user forum.